This series of articles is an irreverent, tongue-in-cheek look at the serious business of risk management and compliance and the lack of scientific rigor dressed up in charts and graphs, which have an appearance of legitimacy, but tell us little about risks.

First of all, let me say that risk management and compliance are important functions and deserve to be taken as seriously as any other discipline in business and government to ensure efficient operational outcomes. My aim in these articles is to point out where many firms diverge from serious risk management into the realm of mystery cloaked as rigor.

Victim #3 – Data, Risk Management and Prediction

In 1814, Pierre-Simon de Laplace, a Newtonian physicist, wrote:

“If an intelligence, at a given instant, knew all the forces that animate nature and the position of each constituent being; if, moreover, this intelligence were sufficiently great to submit these data to analysis, it could embrace in the same formula the movements of the greatest bodies in the universe and those of the smallest atoms: to this intelligence nothing would be uncertain, and the future, as the past, would be present to its eyes.”

Laplace was describing an ideal in which the present state of the world determines precisely how the future will unfold.[1] Deterministic models of the world assume that events unfold in an orderly manner with relative precision in terms of consequence and/or cause.

For example, insurance company actuarial models are based on the assumption that historical losses for workers’ compensation, auto and other industrial accidents will progress along a continuum that is predictable based on past losses. Actuaries smooth volatility in the data so that a few large losses from a small number of accidents do not skew historical trends. To the credit of insurance companies, annual premiums and predictions of future losses are reset to adjust for new trends caused by environmental and operational changes in baseline metrics. However, small changes in interest rate assumptions or improvement in risk factors may cause large swings in outcomes. Therein lies the problem with determinism. It also explains why we are surprised when results do not meet expectations.
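To make the point about interest rate assumptions concrete, here is a minimal sketch in Python. The numbers are hypothetical and chosen only for illustration: a long-tail claim paying 100 at the end of each year for 30 years, discounted at a flat rate. The function name `present_value` and the payment schedule are assumptions, not an actuarial standard.

```python
def present_value(payments, rate):
    """Discount a list of year-end payments back to today at a flat annual rate."""
    return sum(p / (1 + rate) ** t for t, p in enumerate(payments, start=1))

# Hypothetical long-tail claim: 100 paid at the end of each year for 30 years.
payments = [100.0] * 30

pv_at_4 = present_value(payments, 0.04)  # reserve needed if we assume 4% interest
pv_at_3 = present_value(payments, 0.03)  # reserve needed if we assume 3% interest

# Relative increase in the required reserve from a one-point drop in the rate.
change = (pv_at_3 - pv_at_4) / pv_at_4

print(f"Reserve at 4%: {pv_at_4:,.0f}")
print(f"Reserve at 3%: {pv_at_3:,.0f}")
print(f"Increase from a one-point drop: {change:.1%}")
```

Under these assumptions, a one-point change in the interest rate moves the required reserve by a bit over 13 percent, even though nothing about the underlying losses has changed.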

Today’s risk professionals continue to embrace Laplace’s view of the world as described by the axiom “history repeats itself.” But does it? Several conditions would be required for history to repeat itself. The first condition assumes that human behavior conforms to orderly laws of nature. Secondly, determinism is based on perfect data from which the future can be predicted with accuracy. And lastly, it assumes that the tools we use to make predictions possess the computing power to make sense of disparate data with unequivocal precision.[2]

Industries that take risks with other people’s money (for instance, insurance, mutual funds, hedge funds, banks and broker-dealers) are based largely on deterministic models. The clients of these firms assume the risks inherent in the inaccuracy of their models, allowing a few firms to become very large without delivering results comparable to those of index funds. Short-term success in the perceived accuracy of their predictions helps firms find new clients, reinforcing a virtuous cycle of new money.[3]

This partly explains why a large number of hedge fund managers found new money to invest during the early 2000s but now see a massive reversal of fortunes, as well as increased fund closures, in today’s more challenging markets. This is also why professional sports teams change coaches and corporations change CEOs when results fail to meet expectations. What looks like a hot streak, genius incarnate or poor decision-making may be nothing more than randomness: good or bad timing brought on by extenuating circumstances. The CEOs of Enron and Tyco were celebrated as genius leaders who created innovative new businesses out of old-fashioned industries; that is, before they served jail time for fraud and financial accounting scandals.
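How easily can chance pass for genius? The sketch below is a toy simulation, not a model of any real market. It assumes 1,000 hypothetical fund managers who each have a coin-flip chance of beating the market in any given year, and it counts how many of them "win" at least 8 years out of 10. The function name, the parameters and the 50 percent assumption are all illustrative.

```python
import random

def count_lucky_managers(n_managers=1000, n_years=10, min_wins=8, seed=42):
    """Count managers who beat the market in at least min_wins years by luck alone."""
    rng = random.Random(seed)  # local generator so the run is repeatable
    lucky = 0
    for _ in range(n_managers):
        # Each year is an independent coin flip: no skill involved.
        wins = sum(rng.random() < 0.5 for _ in range(n_years))
        if wins >= min_wins:
            lucky += 1
    return lucky

lucky = count_lucky_managers()
print(f"{lucky} of 1000 skill-free managers beat the market in 8+ of 10 years")
```

With a fair coin, the chance of 8 or more wins in 10 tries is 56/1024, or about 5.5 percent, so a few dozen of these skill-free managers should end the decade with a track record that looks like talent.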

But what does this have to do with data, risk management and prediction?

What should be evident is that data science, risk management and prediction are separate disciplines that continue to evolve into increasingly useful tools for answering tough questions about cause and effect related to risks. As technology advances at increasing rates of speed, data, risk management and prediction will enhance decision making more efficiently than intuition or experience. However, this also assumes that risk management is a “science of discovery,” not simply an extension of an accounting or internal controls framework.

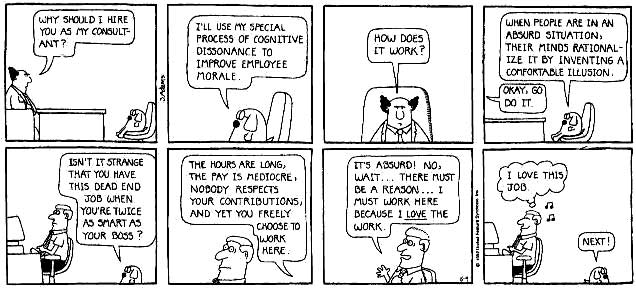

Risk management has lost credibility by over-promising and misdirecting senior management with expectations of “seeing around corners” or “applying brakes on sports cars so the driver can go faster.” These simple analogies are myths used to sell solutions with little substance. One of the biggest myths is that everyone in the organization is responsible for risk management. This statement is the ultimate delusion.

Making everyone responsible for risk management means no one is ultimately responsible for managing risk. For example, what happens when senior executives choose between risk management and bonus-enhancing financial choices? Risk “management” becomes important after the damage has been done.

Risks are malleable and change depending on your circumstances. The risks important to a CEO are vastly different from the risk experience of an entry-level IT professional, yet their risk paths are intricately linked. What is more applicable is how individual decisions by each person in the organization contribute to the risk profile of the firm.

Someone must be responsible for risk discovery, as well as the strategies to understand how risk exposures evolve; that someone should be a risk professional. No one can do this flawlessly. Imagine the iterations of calculations needed to analyze how these collective decisions impact the firm!

Humility should be more evident in our assumptions about risk and failure, with each event presented as a learning opportunity for fine-tuning our understanding of data, risk management and prediction absent excessive praise or condemnation.[4] We seldom have all the appropriate data or the computing power to process data with the accuracy that is required, but that doesn’t mean we shouldn’t continue to try.[5]

[1] Mlodinow, Leonard, “The Drunkard’s Walk: How Randomness Rules Our Lives” (New York, New York, Vintage Books, a division of Random House, Inc., 2008)

[2] http://www.sciencedirect.com/science/article/pii/S188319581000112X

[3] https://blog.rjmetrics.com/2013/02/06/the-5-most-common-data-analysis-mistakes/

[4] https://asaip.psu.edu/Articles/measurement-errors

James Bone’s career has spanned 29 years of management, financial services and regulatory compliance risk experience with Frito-Lay, Inc., Abbott Labs, Merrill Lynch, and Fidelity Investments. James founded Global Compliance Associates, LLC and TheGRCBlueBook in 2009 to consult with global professional services firms, private equity investors, and risk and compliance professionals seeking insights in governance, risk and compliance (“GRC”) leading practices and best in class vendors.
