The COVID-19 pandemic has proven an inflection point for the use of AI and ML technology in the health care sector. Regulators across jurisdictions have provided guidance, but many uses and implementations of AI remain in their infancy. To navigate this legal quagmire, boards will need to use every tool and resource at their disposal.

Health care industry investment in artificial intelligence (AI) and machine learning (ML) technology shows no sign of slowing down, especially on the heels of a record-breaking year for digital health funding and a two-year-long pandemic that both catalyzed adoption of AI-driven technology and provided a perfect showcase for its utility across the industry.

Rightfully so, AI utilization is starting to take center stage in hospital and health system boardrooms as more and more organizations look to broaden the adoption of AI and ML technologies to solve some of their biggest challenges.

Hospitals and Health Systems Are Leaning into AI and ML

Just two years ago, a Sage Growth survey revealed that only half of hospital leaders were familiar with the concept of AI and more than half could not name a specific AI vendor or solution. According to their latest survey (conducted between September and December 2020), however, hospital executives are leaning into and prioritizing the adoption of AI automation technology now more than ever. Some have already seen a return on investment (ROI) through reduced costs.

More specifically, the 2020 survey showed that:

- 75 percent of respondents believe strategic initiatives around AI and automation are more important or significantly more important since the pandemic.

- 76 percent of respondents see an elevation in the importance of AI automation, because cutting wasteful spending will help them recuperate and grow faster.

- 90 percent have an AI automation strategy in place, up from 53 percent in Q3 2019.

- More than half (56 percent) reported an ROI of two times or more on their automation technologies.

Within the health care industry, AI adoption spans a wide range of valuable use cases, both clinical and non-clinical, across stakeholder groups. For example, hospitals and health systems are increasingly investing in the development and deployment of AI to:

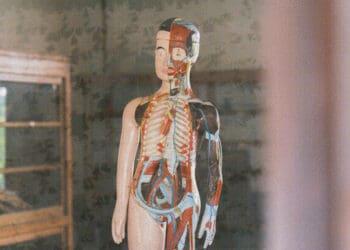

- Read medical images to improve the accuracy of diagnoses in specialties such as radiology, dermatology and anatomical pathology.

- Empower smartphones as diagnostic tools in dermatology, ophthalmology and developmental diseases.

- Combine the power of AI technology, big data and next-generation molecular sequencing to support precision-medicine solutions for diagnosing and treating cancer.

- Curate and de-identify data to enhance the value of electronic health record data for development of predictive analytics.

- Reduce diagnostic errors, which currently account for nearly 60 percent of all medical errors and an estimated 40,000 to 80,000 deaths per year.

- Detect and prevent fraud, waste and abuse.

- Optimize workforce management and make more efficient use of the time of professionals and administrative staff by automating routine tasks such as electronic health record (EHR) documentation, administrative reporting and triaging of computerized tomography (CT) scans.

Evolving Government Oversight of AI Development in Health Care

Recognizing the transformative potential of AI technology, the U.S. Food and Drug Administration (FDA) has been a leader in blazing new trails, particularly through its efforts to adapt its own medical-device regulatory scheme to the unique nature and rapid pace of AI/ML technology innovation.

In January 2021, for example, the FDA released the Artificial Intelligence and Machine Learning (AI/ML) Software as a Medical Device Action Plan setting forth an oversight framework that seeks to balance the essence of AI’s “ability to learn from real-world use and experience, and its capability to improve its performance” with the importance of ensuring that such solutions “will deliver safe and effective software functionality that improves the quality of care that patients receive.” The action plan provides for a Pre-Certification program for AI at the lower end of the spectrum of technology sophistication and associated safety risk, while preserving the current, more rigorous premarket approval process for AI technology at the upper end of that spectrum.

Other domestic and international bodies, including the Office of the President of the United States, the U.S. Office of Management and Budget, the U.S. Department of Health and Human Services, the European Commission, the U.K. and the World Health Organization (WHO), have also published guidance addressing laws, policies and ethical principles that should be taken into account when considering use of AI applications for the delivery of health care services, drug and medical device research and development, health systems management, and the development of public health policy and public health surveillance.

In addition to addressing some of the ethical and liability challenges associated with AI in health care, the WHO has recommended a governance framework focusing on issues such as consent, data protection and sharing, specific private-sector and public-sector interests, and the development of policy and legislation. Similarly, in October 2021, the FDA joined forces with Health Canada and the United Kingdom’s Medicines and Healthcare products Regulatory Agency (MHRA) to identify 10 guiding principles as a foundation for the development of safe, effective and high-quality AI/ML-enabled medical devices that organizations such as the International Medical Device Regulators Forum can build upon.

These guiding principles emphasize the various measures that can help minimize the risks of health care AI, including taking a total-product-lifecycle approach that incorporates ongoing monitoring and testing in clinically relevant conditions; the use of good software engineering and security practices; ensuring the utility, diversity and integrity of the data sets used to build and train the AI model for the intended purpose; measuring performance of both the AI model and the “human in the loop” in the development and implementation of the model; and maintaining the transparency and understandability of the AI.

Framework for Board Oversight of AI Development and Deployment: the New Board Priority and Challenge

The vast power and promise of AI technology in health care and the quest to find novel ways to harness it create a heightened sense of urgency and demand for an appropriate governance oversight framework. At the same time, the relatively uncharted AI innovation terrain presents significant challenges that health care boards must tackle in developing that framework. Such challenges include:

- Managing a complex and uncertain labyrinth of legal, regulatory and other enterprise risks associated with AI development and integration, investments, and the execution of essential transactions and relationships with other key stakeholders, particularly in the absence of a fully developed legal and regulatory scheme for doing so.

- Creating trust in the technology among patients, practitioners and the public.

- Maintaining integrity, availability, privacy and security of the robust data needed to train and deploy AI.

- Avoiding bias, discrimination and other ethical issues.

- Developing (and updating) processes and standards to manage known and unknown liabilities.

- Providing a clear pathway for addressing unexpected events.

To be effective, the governance oversight framework must be disciplined yet pragmatic and nimble, take an integrated approach to the bevy of associated enterprise risks, and align with the organization’s innovation strategy and its existing framework for oversight of innovation. From strategic planning, clinical quality and risk management, to social responsibility and ethics, there are many areas that boards need to take into consideration.

Current compliance and enterprise risk programs will provide a solid foundation for building a board oversight framework. However, such existing programs will need to be adapted, updated, expanded and continually reevaluated and re-adapted as needed to address the novel risks presented by AI innovation, to keep pace with its ever-changing nature and the rapid pace of its development, and to select partners and implement collaborations that align with the organization’s AI strategy and risk tolerance.

In building a framework for AI governance, hospital and health system boards should consider embracing the following priorities:

- Engendering trust in AI technology. Adoption and use of AI solutions by organizational leadership, providers, patients and the public must be predicated on a commitment to AI innovation that is clinically reliable, secure, fair, resilient and manageable. Achieving such trust must be at the very core of the board oversight framework.

- Creating a system of responsibility and accountability. The framework must clearly identify internal and external parties responsible for AI strategy, integration, risk management and crisis response, the nature and extent of board-delegated authority, and guidelines for responsibly exercising such authority.

- Articulating criteria for identifying, pursuing and managing alternative AI opportunities and associated risks relative to the intended uses. The board should establish specific, appropriate guidelines and criteria for identifying, assessing and selecting AI innovation opportunities that align with its innovation strategy, risk tolerance, mission, goals, values and ethical principles.

- Selecting reliable business and technology partners and vendors that share the organization’s priorities and values. Because the field involves so many newcomers and so many legal, regulatory and technological shades of gray, AI innovation presents a heightened risk of misalignment, disagreements and miscommunications among partners and supporting vendors across the full enterprise risk spectrum. Managing these risks requires disciplined and thorough due diligence; negotiations with third parties should be used to identify and address potential conflicts and misalignment.

- Monitoring the safety, effectiveness and return on investment of current and future AI innovation opportunities throughout the product lifecycle. To support such monitoring, inventories should be maintained that track all AI-related opportunities that the organization is considering, actively pursuing and deploying. The framework for oversight should provide clear guidance for how and by whom identified concerns should be investigated and resolved.

- Establishing data-governance and stewardship principles to maintain data accuracy, completeness, privacy, security and lack of bias or discrimination. These principles, at a minimum, must conform to federal, state and international laws, regulations and standards, and incorporate other industry requirements and standards that go beyond minimal legal and regulatory requirements.

- Adapting to new challenges, opportunities, standards and requirements. The evolving nature of AI technology itself and of the applicable legal and regulatory framework calls for a nimble framework and ongoing education. Boards, business leadership and key staff must remain current with legal, regulatory, technological and operational developments that affect AI innovation and business transformation.
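The inventory described in the monitoring priority above can be pictured concretely. The following is a minimal, hypothetical sketch in Python of one way such an inventory might be structured. It assumes three lifecycle stages (considering, actively pursuing, deployed) drawn from the wording above. Every class, field and example entry is an illustrative assumption, not a prescribed or regulatory format.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Stage(Enum):
    """Lifecycle stages taken from the wording of the monitoring priority."""
    CONSIDERING = "considering"
    PURSUING = "actively pursuing"
    DEPLOYED = "deployed"


@dataclass
class AIInitiative:
    """One inventory entry. All field names are hypothetical."""
    name: str
    intended_use: str
    stage: Stage
    accountable_owner: str  # who investigates and resolves concerns
    open_concerns: List[str] = field(default_factory=list)


@dataclass
class AIInventory:
    """A simple in-memory register of AI initiatives."""
    initiatives: List[AIInitiative] = field(default_factory=list)

    def add(self, initiative: AIInitiative) -> None:
        self.initiatives.append(initiative)

    def by_stage(self, stage: Stage) -> List[AIInitiative]:
        """Return every initiative currently at the given stage."""
        return [i for i in self.initiatives if i.stage == stage]

    def needing_review(self) -> List[AIInitiative]:
        """Return initiatives with at least one unresolved concern."""
        return [i for i in self.initiatives if i.open_concerns]


# Illustrative entries only; these are not real projects.
inventory = AIInventory()
inventory.add(AIInitiative(
    name="Imaging triage pilot",
    intended_use="Prioritize CT scans for radiologist review",
    stage=Stage.DEPLOYED,
    accountable_owner="Chief Medical Information Officer",
    open_concerns=["Performance not yet re-tested on recent data"],
))
inventory.add(AIInitiative(
    name="Documentation assistant",
    intended_use="Draft routine EHR documentation",
    stage=Stage.CONSIDERING,
    accountable_owner="Chief Nursing Officer",
))

for item in inventory.needing_review():
    print(f"{item.name}: refer to {item.accountable_owner}")
```

Recording an accountable owner on each entry reflects the point that the framework should make clear how and by whom identified concerns are investigated and resolved. A real inventory would likely live in the organization's existing risk or compliance systems rather than in standalone code.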

Summary and Conclusion

Without a doubt, responsible oversight of AI innovation is now and will remain a major governance imperative for hospital and health system boards. Such oversight will challenge boards to develop new and different approaches to governance that accommodate the novel, complex and ever-evolving nature of AI technology and the absence of a clear legal and regulatory scheme. There will be no one-size-fits-all approach.

Boards will need the support and guidance of a multidisciplinary team to develop an oversight framework that is tailored to the organization’s innovation strategy, mission, vision, goals and culture, and that best positions the organization for sustainable short- and long-term success. This will require:

- Working in close concert with executive management and business unit leaders to ensure visibility and alignment in approach across the executive suite.

- Seeking input and guidance from the intended end users across their systems so that important perspectives are well represented and can help drive ultimate success.

- Engaging and coordinating board committees.

- Mobilizing a legal team composed of the chief legal officer (CLO) and other in-house counsel, who can bring a wealth of institutional knowledge to advise on priorities and make recommendations that align with the organization’s strategic goals and standards, and external legal counsel, who can add depth of expertise and experience in governance, regulation of digital health innovation, and digital health collaborations and other transactions and relationships.

Taken together, these steps can help health care boards and leadership establish effective, clear and flexible governance approaches to the development, testing and adoption of AI tools.

Bernadette Broccolo

Michael W. Peregrine