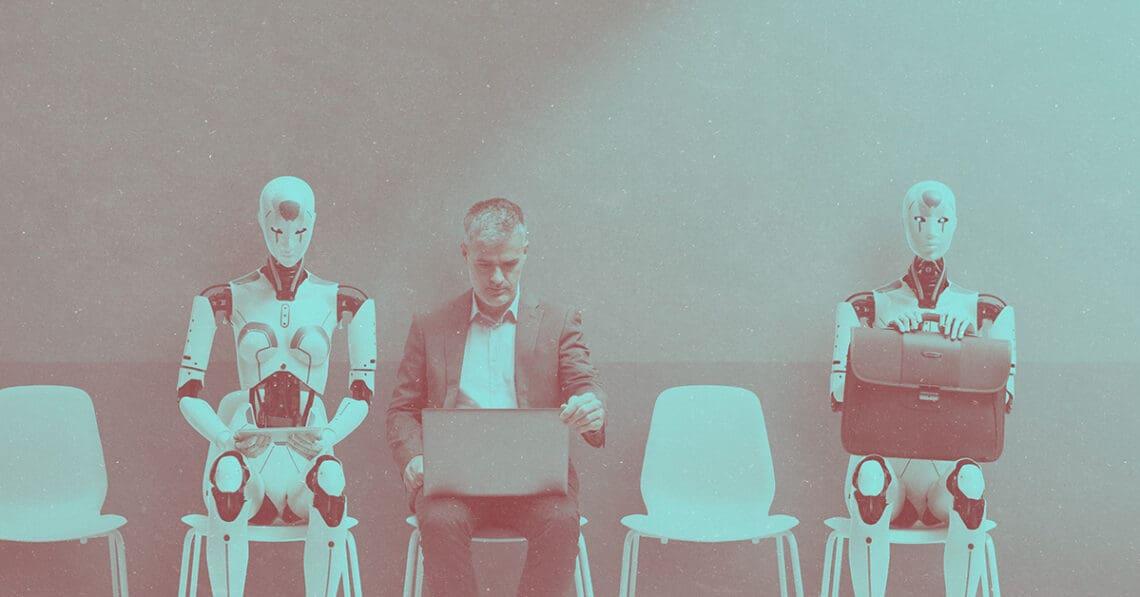

AI can make some workers more efficient, but is it ready to replace them entirely? Some companies very publicly took a side and have since backtracked, in some cases even rehiring people they laid off. CCI editorial director Jennifer L. Gaskin explores the legal, reputational and cultural risks that come with the AI boomerang.

AI will displace human workers in the hundreds of thousands or even millions. It’s a claim that, depending on whom you believe, is undersold or oversold. And while time will ultimately tell who was right, some companies that acted on the assumption that machines could replace people have begun to have second thoughts.

Klarna is the most public test case, but reporting and research suggest other companies are experiencing something similar: the AI boomerang. AI made jobs more efficient, so companies eliminated them only to find out later they needed people after all. The AI layoffs make front-page headlines; the quiet rehiring only makes the want-ads.

Companies’ reversal on AI-related layoffs opens them up to a cascade of risks, from the potential for legal action to public repudiation of leadership. And while these concerns may be unavoidable for companies already in the AI boomerang, good risk planning can help others avoid the trap.

The biggest lesson at this early stage of AI’s development and deployment is to understand what the technology can actually do right now without human intervention, says Asha Palmer, senior vice president of compliance solutions at training provider Skillsoft — not what it might be able to do years down the line.

“Some of the things that it does, it doesn’t do them well enough to remove the humans in the loop that need to understand whether it is doing the things it’s supposed to be doing,” Palmer said. “I think a lot of people prematurely made decisions on even what AI is capable of. It may do some of these things in the future, but it’s not ready to do those things on its own right now.”

New tech, same employment law risks

For companies that moved fast and are now backtracking, the consequences may extend well beyond embarrassment. The most immediate legal concern is also among the most straightforward: When a company lays off workers citing AI and then turns around and rehires for the same roles, it invites scrutiny of whether the original rationale was genuine.

“When you tell folks initially, ‘We’re doing layoffs and that means we’re eliminating your positions,’ but then shortly thereafter you fill the positions again … that always creates a little bit of ‘Wait a minute, did my employer tell me the truth?’” said Marissa Mastroianni, a member at Cole Schotz. “‘Because I looked, and now two months later, they’re hiring my position back. Does that mean that the reason for termination was pretextual, and instead I was actually fired due to [being in] a protected category?’”

The process itself — how the layoffs were done and how the rehiring is being done — could also draw scrutiny, Mastroianni warned, especially in scenarios where those who were let go or those asked back (or not asked back) are members of a protected group.

“If you terminated everyone in a classification but only rehired people who were under 40 years old, that’s going to create some age discrimination claims, potentially,” Mastroianni said. The important lesson: The selection criteria that governed the layoff and the selection criteria that govern the rehire require equal scrutiny.

Federal and state laws on WARN Act notifications add more complexity. The federal WARN Act requires 60 days’ advance notice before a qualifying mass layoff, though exceptions exist for unforeseeable business circumstances. If a company invoked one of those exceptions to shorten or eliminate the notice period and then rehires within months, the reversal could call the exception into question. Mastroianni also noted that properly drafted severance agreements, while they come at a cost, remain one of the more effective tools for limiting exposure from future claims.

The state landscape is shifting rapidly. Colorado’s AI Act, currently expected to take effect June 30, requires employers to guard against algorithmic discrimination and imposes civil liability for violations. Illinois’ law, in effect since January, prohibits AI-driven discrimination in personnel decisions and requires notification when AI is used in hiring or firing, though the state has yet to finalize the implementing rules. In New York City, a measure in effect since 2023 requires employers to conduct bias audits of automated employment decision tools used in hiring and promotion. A pending California bill would extend the state’s WARN Act specifically to AI-driven layoffs, requiring 90 days’ notice and lowering the workforce threshold that triggers the requirement. That’s to say nothing of the EU’s new risk-based framework under the AI Act, which may apply to companies in the US.

Mastroianni noted that while the legislative activity has been significant, seminal court decisions haven’t come down — yet.

“A lot of times … there is a lag between when the events actually happen and when they get litigated through the court system,” she said. “We’re still on the precipice of that. But between now and a year from now, I think we’re going to see a lot more litigation getting filed.”

What won’t show up on the balance sheet

Though they are still evolving, the legal risks are definable. The reputational and cultural damage from a botched AI layoff is harder to scope and slower to repair.

Being told a computer can do your job and then being asked to come back is not an easy message to hear, and the returning employees aren’t the only ones listening, Mastroianni warned. Potential job candidates are likely to have watched the situation unfold from the outside.

“They’ve seen maybe in the news that this happened. And maybe they’re a little concerned: ‘Is this really a stable job opportunity for me or not?’” she said.

The internal consequences are serious, Palmer said.

“When there’s a lack of trust, there is a rise in misconduct and other types of things — because it’s everyone for themselves,” she said. “Just how those individuals then interact with their peers and colleagues — it gets to be a tough place, from a culture standpoint, for companies.”

The talent pipeline damage may be the most durable. Palmer frames it as a skill supply chain problem: Mass layoffs that eliminate junior and mid-level roles not only reduce headcount today but also cut off the development track that produces future senior leaders.

“Thinking about law, the reason I can examine cases and do the things that I could do in the courtroom when I was practicing was because I did it as a junior associate, and I was the one doing the document review,” Palmer said.

When that document review can be done more cost effectively by AI, what becomes of that junior lawyer and how do they gain the requisite skills to move up?

For companies already in the boomerang, Mastroianni says messaging is among the most important tools available — even if its limits are real and successfully reintegrating formerly laid-off workers isn’t easy.

“Sometimes it may not be repairable,” she said — but messaging is critically important: “You want to try to preempt those questions and thoughts that employees are naturally going to have.”

She also recommends strengthening employee benefit and incentive programs as part of any rehiring effort, noting that rebuilding trust is a long-term project.

Palmer’s prescription for companies that haven’t yet had to do the AI layoff two-step is a planning exercise some seem to have skipped in the rush to announce transformation: “What are our use cases? How are we using it? How do our people want to use it? And then: how does this impact humans, our skill supply chain, our go-to-market strategy? What are the risks?”

The irony, Palmer notes, is that none of this is new. Every significant technology disruption has required companies to think through the same questions of risk, workforce impact and long-term planning. AI is different in degree, not in kind.

“There’s always risks and efficiencies that you can see and achieve,” she said. “But humans are still part of this equation — for now.”

Jennifer L. Gaskin is editorial director of Corporate Compliance Insights. A newsroom-forged journalist, she began her career in community newspapers. Her first assignment was covering a county council meeting where the main agenda item was whether the clerk's office needed a new printer (it did). Starting with her early days at small local papers, Jennifer has worked as a reporter, photographer, copy editor, page designer, manager and more. She joined the staff of Corporate Compliance Insights in 2021.
