Good GRC requires data. But just how current and reliable is it? Most businesses are producing something less than “fresh bread,” argue Paresh Chiney and Charles Soha of StoneTurn. Here, they present their recipe for enhancing compliance and risk management.

When designing and building a compliance and risk data infrastructure, the term “data lake” is hard to avoid: technology vendors use it, cloud service providers evangelize it and data analyst job descriptions embed it. While it is convenient, and broadly accurate, to think of enterprise compliance and risk data management as a centralized reservoir of vital resources that drives an organization forward in the digital economy, the analogy oversimplifies the complexities of both effective implementation and ongoing management.

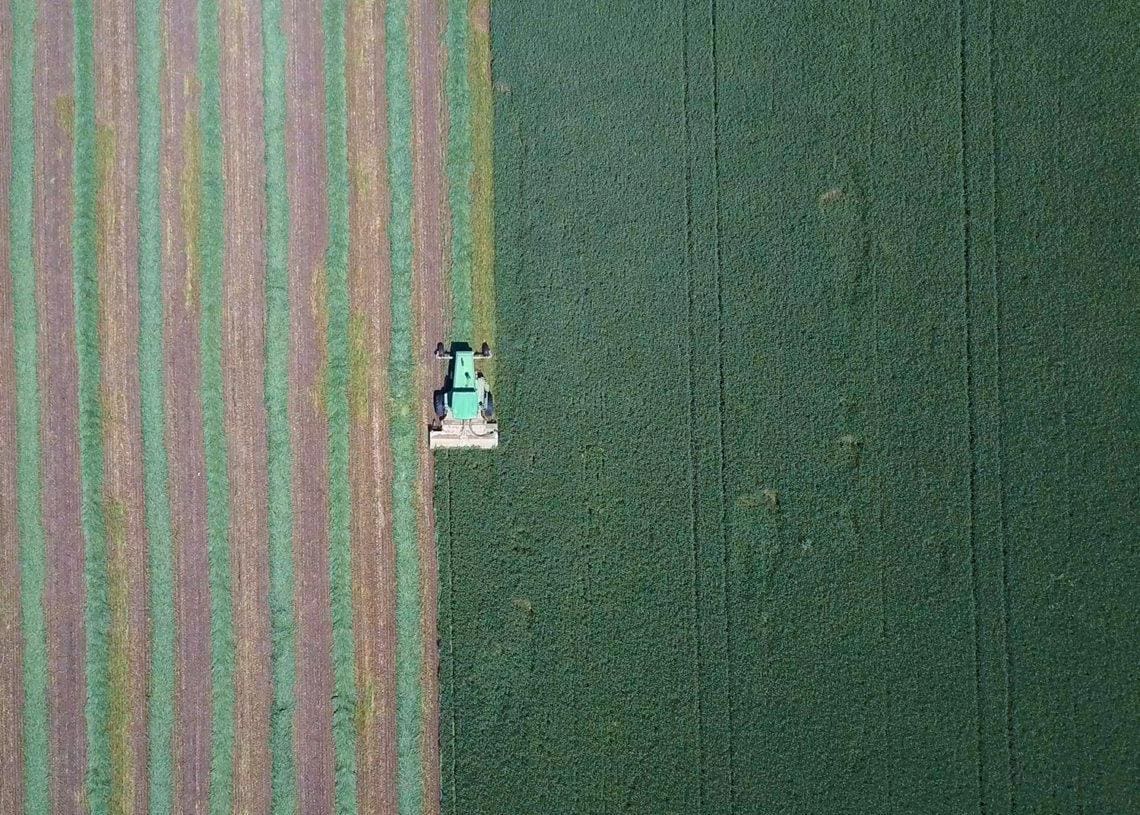

Not a Lake but a Farm

An optimized risk and compliance data infrastructure should be a consciously cultivated ecosystem: one grounded in an understanding of the current recordkeeping environment, backed by senior management’s dedicated commitment to instilling consistent operational behaviors and built on careful plans.

This is not a lake. Perhaps a more potent analogy is a data farm; in this case, one that raises wheat to make bread. That is, your data farm’s end goal should be to provide fresh, delicious sliced bread in the form of actionable insights for the C-suite, stakeholders and regulators.

Running with this analogy, we submit that businesses need to resist the temptation to assume that good bread, or good data management, is already on the table. Too often it is not, usually because of a lack of management buy-in or myopic cost and timeline considerations.

Therefore, we suggest that risk and compliance executives revisit their fundamentals. To grow the wheat and bake fresh bread, your company needs to go back and:

- Survey the land: Understand what applications exist in the organization and where the data is stored to prioritize efforts

- Assess the crop: Understand what data is relevant and whether critical data elements are captured to perform risk analytics, while consolidating and rationalizing sources

- Achieve consistent yields: Implement data governance and data quality controls

Data Storage: Survey the Land

A primary consideration when building enterprise data infrastructure, including risk data repositories, is how data is stored and where it is located geographically. There are numerous variables in the cost-benefit equation when assessing whether to use traditional on-premises data servers and storage vs. cloud-based solutions.

Step One: Figure Out Your Mix of Cloud and On-Premises

In general, cloud-based solutions are preferred in high- and rapid-growth business environments because the marginal costs of additional storage, maintenance, disaster recovery and security decrease with scale. On the flip side, however, migrating data between providers can be prohibitively costly, and using cloud storage introduces third-party contract risk.

An on-premises solution may be more suitable for larger, more complex, established organizations that require full control over their data and wish to manage their own cybersecurity and disaster recovery. It may also suit organizations with jurisdictional compliance requirements that govern where data can be stored, which is difficult to control in the cloud.
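To make these trade-offs concrete, the sketch below encodes them as a rough rule of thumb in Python. The `HostingProfile` fields and the `suggest_hosting` function are hypothetical, and a real decision would rest on a full cost-benefit analysis rather than three yes/no flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostingProfile:
    """Hypothetical yes/no inputs mirroring the factors discussed above."""
    high_growth: bool           # rapid growth favors the scale economics of cloud storage
    needs_full_control: bool    # wants to run its own cybersecurity and disaster recovery
    data_residency_rules: bool  # jurisdictional rules govern where data may be stored


def suggest_hosting(profile: HostingProfile) -> str:
    """Return a rough starting point for the cloud / on-premises mix."""
    on_prem_signal = profile.needs_full_control or profile.data_residency_rules
    if on_prem_signal and profile.high_growth:
        # Competing pressures: keep restricted data in-house, scale the rest in the cloud.
        return "hybrid"
    if on_prem_signal:
        return "on-premises"
    if profile.high_growth:
        return "cloud"
    return "no clear signal: compare total cost of ownership"


print(suggest_hosting(HostingProfile(high_growth=True, needs_full_control=False,
                                     data_residency_rules=True)))  # hybrid
```

The point of the sketch is only that the answer is usually a mix, not a single choice; the flags stand in for much richer cost, contract-risk and regulatory inputs.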

Step Two: Figure Out How Many Applications Are Up and Running in Your Environment

An application inventory can range from a dozen to several thousand systems or applications, depending on an organization’s size and complexity and on its policies for, and enforcement of, the tools employees use. From there, organizations should sort these applications into prioritization buckets, for example by whether they are owned in-house or hosted by a third party and by how critical they are to business operations, risk management or compliance.
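A minimal Python sketch of this bucketing step, assuming a hypothetical inventory in which each application has already been tagged with a hosting model and a three-level criticality rating. The `Application` and `Criticality` names and the sample entries are illustrative only.

```python
from dataclasses import dataclass
from enum import IntEnum


class Criticality(IntEnum):
    """Hypothetical three-level rating of importance to operations, risk or compliance."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Application:
    name: str
    hosted_by_third_party: bool
    criticality: Criticality


def bucket_applications(inventory):
    """Split the inventory by hosting model, listing the most critical applications first."""
    buckets = {"in-house": [], "third-party": []}
    # sorted() is stable, so equally critical applications keep their inventory order.
    for app in sorted(inventory, key=lambda a: a.criticality, reverse=True):
        key = "third-party" if app.hosted_by_third_party else "in-house"
        buckets[key].append(app.name)
    return buckets


inventory = [
    Application("Expense tool", False, Criticality.MEDIUM),
    Application("Contract repository", True, Criticality.HIGH),
    Application("Intranet wiki", False, Criticality.LOW),
    Application("ERP", False, Criticality.HIGH),
]
print(bucket_applications(inventory))
# {'in-house': ['ERP', 'Expense tool', 'Intranet wiki'], 'third-party': ['Contract repository']}
```

Separating third-party hosted systems early matters because, as noted below, they tend to carry the contractual hurdles.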

Third-party hosted applications often pose administrative, contractual or operational hurdles to consolidating data for risk management functions, and they may require contract changes before an organization can get a customized cadence of data feeds or easy-to-access reports from a web browser. Classification for business criticality often correlates directly with business continuity and disaster recovery practices, so risk and compliance managers should collaborate with their IT counterparts to avoid reinventing the millstone.

Data Availability: Assess the Crop

After determining storage locations and defining the universe of business applications, organizations need to assess the relevance of data within each application to ongoing risk and compliance management procedures and long-term goals. This prioritization roadmap determines:

- What data pipelines do we need to build?

- Which data pipelines should we attempt to consolidate and decommission?

- What should business units consider in terms of changing their processes to provide greater value to risk management and compliance initiatives?

Part of this exercise may be to ask, “How many expense management systems do I have?” There could be a variety of legitimate reasons for having more than one, such as recent M&A activity that is still integrating legacy technologies or the ease of transacting locally with customers on specific platforms in certain jurisdictions. An even stronger argument might be that local privacy laws necessitate segregated data infrastructure, where risk data would need to be sanitized and consolidated separately anyway, so an investment in building a data pipeline would still be required.

A weak argument for maintaining multiple expense platforms might be that a European business unit prefers its system because it automatically calculates value-added tax (VAT), when, more likely than not, the North American counterpart could do the same with a cheap, minor, one-time software update.

In general, efficiency and data quality in risk-management activities improve when data is sourced from fewer systems with overlapping capabilities. There may be exceptions. For example, developing a robust risk-management architecture to consolidate third-party risk management (TPRM) evaluation and measurement could be an expensive proposition. In some cases, running multiple smaller platforms becomes the optimal solution.
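One way to start the “How many expense management systems do I have?” exercise is to group the inventory by business capability and flag any capability served by more than one system that lacks a documented reason, such as M&A integration, local privacy law or a consolidation cost that outweighs the benefit. The sketch below is a hypothetical Python illustration; the system names and the `justifications` mapping are assumptions.

```python
from collections import defaultdict


def find_unjustified_duplicates(inventory, justifications):
    """Return capabilities served by several systems with no documented reason.

    inventory: iterable of (system_name, capability) pairs.
    justifications: capability -> documented reason for keeping multiple systems.
    """
    systems_by_capability = defaultdict(list)
    for system, capability in inventory:
        systems_by_capability[capability].append(system)
    return {
        capability: systems
        for capability, systems in systems_by_capability.items()
        if len(systems) > 1 and capability not in justifications
    }


inventory = [
    ("Expense system EU", "expense management"),
    ("Expense system NA", "expense management"),
    ("TPRM portal A", "third-party risk"),
    ("TPRM portal B", "third-party risk"),
    ("General ledger", "financial reporting"),
]
justifications = {"third-party risk": "consolidating platforms costs more than it saves"}
print(find_unjustified_duplicates(inventory, justifications))
# {'expense management': ['Expense system EU', 'Expense system NA']}
```

Flagged capabilities are candidates for consolidation and decommissioning; documented exceptions, like the TPRM case above, still need their own data pipelines.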

Identifying risk- and compliance-relevance is also important. For example, email and internal messaging applications might be paramount to successful business development, customer satisfaction and revenue generation. However, the data records that email generates are typically lower on the risk-management totem pole and are difficult to convert to a structured format that delivers quantitative data for decision making. In contrast, customer contracts and agreements are critical to evaluate performance against service-level agreements (SLAs), to mitigate contract compliance risks and to understand revenue collection efficacy, among other measures that are crucial to risk managers.

Organizations may already have several data repositories that support various compliance and first-line-of-defense business control functions. On the surface, these repositories are great opportunities to build risk infrastructure efficiently, but they should be reused with caution. In many cases, the data has been transformed and manipulated for specific, often esoteric purposes that can lead to faulty or incomplete inferences, so it is critical to understand data lineage and establish a single source of truth.
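Understanding lineage amounts to being able to walk any repository back to its system of record and list every transformation applied on the way. A minimal Python sketch, assuming each dataset simply records its upstream source and the steps applied to it; the `Dataset` class and the dataset names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Dataset:
    name: str
    source: Optional["Dataset"] = None  # upstream dataset this one was derived from
    transformations: List[str] = field(default_factory=list)  # steps applied to the source


def trace_lineage(dataset: Dataset) -> Tuple[str, List[str]]:
    """Walk upstream to the root dataset and list every transformation, oldest first."""
    steps: List[str] = []
    current = dataset
    while current.source is not None:
        steps = current.transformations + steps  # upstream steps happened earlier
        current = current.source
    return current.name, steps


billing = Dataset("billing_system")
reporting = Dataset("financial_reporting_repo", billing, ["convert to USD", "aggregate by month"])
risk_view = Dataset("risk_cash_view", reporting, ["filter incoming cash"])
print(trace_lineage(risk_view))
# ('billing_system', ['convert to USD', 'aggregate by month', 'filter incoming cash'])
```

A trace like this shows at a glance whether a repository is fit for a given risk question or whether the data should be pulled from the system of record instead.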

For example, a risk manager might attempt to consume data from a financial reporting repository that holds all incoming cash transactions. However, if every amount in that system has been standardized to U.S. dollars, it may be cumbersome to convert those amounts back into local currencies to validate whether a customer was billed correctly.
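The arithmetic behind this caveat can be shown in a few lines of Python. Assuming a hypothetical repository that stores only USD amounts rounded to the cent, converting back requires knowing the exact rate that was applied, and even then rounding can make the original invoice amount unrecoverable. The rate and amounts below are invented for illustration.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def to_usd(local_amount: Decimal, rate_to_usd: Decimal) -> Decimal:
    """Standardize a local-currency amount to USD, rounded to the cent."""
    return (local_amount * rate_to_usd).quantize(CENT, rounding=ROUND_HALF_UP)


def back_to_local(usd_amount: Decimal, rate_to_usd: Decimal) -> Decimal:
    """Attempt to recover the local-currency amount from a stored USD figure."""
    return (usd_amount / rate_to_usd).quantize(CENT, rounding=ROUND_HALF_UP)


rate = Decimal("0.012")        # hypothetical local-currency-to-USD rate
invoiced = Decimal("1000.37")  # amount billed in local currency
stored_usd = to_usd(invoiced, rate)
recovered = back_to_local(stored_usd, rate)
print(stored_usd, recovered, recovered == invoiced)
# 12.00 1000.00 False
```

Because the round trip loses 0.37 in local currency, billing validation is more reliable when it draws local-currency amounts directly from the billing system of record.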

Data Governance: Achieve Consistent Yields

After hashing out storage, relevant and available data sources and lineage, the focus shifts to data quality and consistency. In our farm analogy, it’s OK to grow both wheat and rye, but we must be able to tell the two apart and use each in the right circumstances.

This boils down to data governance, which is a multiphase, evolving process in most organizations with both top-down and bottom-up components. A top-down approach could be to start with an end-goal product, such as a report on control effectiveness broken down by deficiency types.

Taxonomy is crucial. For example, suppose an audit department tests some key controls, and its system of record classifies them as preventive or detective and as manual or automated but does not record whether each test addressed existence, design, effectiveness or rationale. It will then not be possible to report on those deficiency types without mining historical audit reports to glean the classifications.

Such a report would be expensive to produce and inherently limited. A gap analysis that reconciles reporting requirements to available data elements allows organizations to start filling gaps and meet their risk-monitoring and compliance management goals, concurrently with their bottom-up efforts.
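At its simplest, the gap analysis is a set difference between the data elements each report needs and the fields the system of record captures. A hypothetical Python sketch based on the audit example; the report names and field names are assumptions.

```python
# Hypothetical report requirements and field names based on the audit example above.
REPORT_REQUIREMENTS = {
    "control inventory by type": {"control_id", "control_nature", "control_method"},
    "control effectiveness by deficiency type": {
        "control_id", "control_nature", "control_method", "deficiency_type",
    },
}

# Fields the audit system of record captures today: preventive/detective is stored in
# control_nature and manual/automated in control_method, but there is no deficiency type.
AVAILABLE_FIELDS = {"control_id", "control_nature", "control_method", "test_result"}


def gap_analysis(requirements, available):
    """Map each report to the required data elements the source system does not capture."""
    gaps = {}
    for report, needed in requirements.items():
        missing = needed - available
        if missing:
            gaps[report] = sorted(missing)
    return gaps


print(gap_analysis(REPORT_REQUIREMENTS, AVAILABLE_FIELDS))
# {'control effectiveness by deficiency type': ['deficiency_type']}
```

Each missing element then becomes a concrete change request to the system of record rather than a recurring manual mining exercise.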

Bottom-up assessments start with identifying critical data elements that influence decisions around risk. Here it is essential to evaluate the range of permissible values for each critical data element and to make decisions such as:

- Whether a date field should be changeable once submitted

- If certain memo and free-form text fields should be supplanted with a drop-down or picklist to facilitate categorical inferences

- Whether automated data quality checks should run before a record is even saved to a system (e.g., mandating that the invoice date be earlier than the payment date)
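The three kinds of decisions above can be sketched as checks that run before a record is accepted. This Python example is hypothetical: the `Invoice` fields, the category picklist and the `LockedFieldError` exception are illustrative assumptions, not features of any particular system.

```python
from dataclasses import dataclass
from datetime import date
from typing import List

EXPENSE_CATEGORIES = {"travel", "meals", "software", "consulting"}  # hypothetical picklist


class LockedFieldError(Exception):
    """Raised when someone tries to change a field that is locked after submission."""


@dataclass
class Invoice:
    invoice_date: date
    payment_date: date
    category: str  # picklist value instead of free-form text
    submitted: bool = False


def validate(invoice: Invoice) -> List[str]:
    """Return data quality errors; an empty list means the record may be saved."""
    errors = []
    if not invoice.invoice_date < invoice.payment_date:
        errors.append("invoice date must be earlier than payment date")
    if invoice.category not in EXPENSE_CATEGORIES:
        errors.append(f"unknown category {invoice.category!r}")
    return errors


def save(ledger: List[Invoice], invoice: Invoice) -> None:
    """Run the checks before the record reaches the system, then lock it."""
    errors = validate(invoice)
    if errors:
        raise ValueError("; ".join(errors))
    invoice.submitted = True
    ledger.append(invoice)


def change_invoice_date(invoice: Invoice, new_date: date) -> None:
    """Allow edits to the date only while the record is still a draft."""
    if invoice.submitted:
        raise LockedFieldError("invoice date cannot change once submitted")
    invoice.invoice_date = new_date


ledger: List[Invoice] = []
save(ledger, Invoice(date(2024, 3, 1), date(2024, 3, 15), "travel"))
try:
    save(ledger, Invoice(date(2024, 3, 20), date(2024, 3, 15), "misc"))
except ValueError as err:
    print(err)  # invoice date must be earlier than payment date; unknown category 'misc'
```

Rejecting bad records at entry is usually cheaper than cleansing them downstream, which is why these checks sit in front of the save step.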

After controls around data conformance and integrity are established, changes to operational behaviors outside of risk management may be necessary, so securing business leadership buy-in at the outset of any data governance effort is a critical prerequisite.

Lastly, to ensure data is used and interpreted uniformly across business and risk functions, it is prudent to compile a data dictionary that defines critical data elements and provides a legend for any codified values. At that point, you are farming high-quality data and are ready to begin looking at advanced analytics.
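A data dictionary can be as simple as a shared mapping from each critical data element to its definition and the legend for its codes. The Python sketch below assumes hypothetical element names and code values; it shows one way to make every function decode values the same way and reject undefined ones.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class DataElement:
    name: str
    definition: str
    codes: Dict[str, str] = field(default_factory=dict)  # legend for codified values


# Hypothetical entries; codes and definitions are illustrative only.
DATA_DICTIONARY = {
    "control_nature": DataElement(
        "control_nature",
        "Whether the control is designed to prevent or to detect an issue.",
        {"P": "Preventive", "D": "Detective"},
    ),
    "deficiency_type": DataElement(
        "deficiency_type",
        "The aspect of the control that testing found deficient.",
        {"EX": "Existence", "DE": "Design", "EF": "Effectiveness", "RA": "Rationale"},
    ),
    "invoice_date": DataElement(
        "invoice_date",
        "Date the invoice was issued; locked once the record is submitted.",
    ),
}


def decode(element_name: str, code: str) -> str:
    """Translate a stored code into its agreed meaning, rejecting undefined values."""
    legend = DATA_DICTIONARY[element_name].codes
    if code not in legend:
        raise ValueError(f"{code!r} is not a defined value for {element_name}")
    return legend[code]


print(decode("deficiency_type", "DE"))  # Design
```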

No More Stale Bread

It’s not a data lake you need; it’s a data farm. By revisiting their fundamentals (surveying the land, assessing the crop and taking steps to deliver a consistent yield), businesses will shift from having to deal with stale or even moldy bread to farm-to-table freshness. Your business will be equipped to make better decisions based on current information and to operate with lower risk. Your risk and compliance managers will be able to stand tall in boardrooms or in front of regulators, wowing them with freshly baked, sliced bread. The difference between those who perform consistently and those left with stale bread is securing and managing a plentiful and sustainable supply of the ingredients.

Paresh Chiney

Chuck Soha